Published 10/25/2025

Real-time transcription is all about turning spoken words into text, right as they're being said. Think of it as a lightning-fast digital stenographer, capturing a conversation live and displaying it on a screen. This makes information available, searchable, and usable in the moment.

Picture a world where every word spoken is instantly captured, understood, and turned into valuable data. That’s not science fiction—it's what real-time transcription makes possible today. We've moved way beyond simple dictation; this is about creating a fluid connection between what we say and the digital text we can work with.

The impact is huge. For businesses, it means getting live meeting notes without anyone having to type furiously, or gaining immediate insights from customer service calls. In the media world, it's the engine behind live captions on broadcasts, opening up content to millions. The concept is simple, but its uses are incredibly far-reaching.

The need for instant, accurate documentation is exploding. With so many of us working remotely or in hybrid setups, having a reliable record of what was said in virtual meetings is no longer a "nice-to-have"—it's crucial for keeping everyone on the same page. This shift is fueling massive growth in the market.

The U.S. transcription services market, which is a great indicator for real-time services, was valued at around $30.42 billion in 2024. This number really drives home the enormous demand for turning speech into text across all sorts of industries.

But this growth isn't just about convenience. It’s about unlocking the value hidden inside spoken conversations. When you can convert live audio into structured data, you can spot trends, check for compliance, and make your operations more efficient, all in real time. For a deeper dive, you can explore the latest transcription market trends and see where the technology is headed.

At its core, real-time transcription is about knocking down communication barriers to create a more inclusive and productive world. It gives people and organizations the power to do some amazing things.

In this guide, we'll pull back the curtain on the technology that powers all this, look at its most effective applications, and show you how to start using it yourself.

Ever wonder what’s happening behind the scenes when you see words appear on a screen as you speak? It feels like magic, but it’s really just an incredibly fast and sophisticated process. Think of it like a digital stenographer working at the speed of sound.

It all starts the moment your voice hits a microphone.

The system first has to capture and segment the audio. Your microphone turns your voice into a digital audio stream, but the AI doesn't wait for you to finish your sentence to get started. Instead, it immediately chops that stream into tiny, bite-sized chunks, often just tens of milliseconds long. This is the secret to its speed: by working on small pieces continuously, it avoids getting bogged down.

This constant flow of audio data is the fuel for the entire process. Each little segment is then broken down even further to isolate its unique acoustic properties.
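To make the chunking step concrete, here is a minimal sketch in Python. The 16 kHz sample rate and 20 ms frame length are assumptions chosen for illustration, and `split_into_frames` is a hypothetical helper, not part of any particular transcription service.

```python
from typing import Iterator, List

SAMPLE_RATE_HZ = 16_000  # assumed sample rate (samples per second)
FRAME_MS = 20            # assumed frame length in milliseconds


def split_into_frames(
    samples: List[int],
    sample_rate_hz: int = SAMPLE_RATE_HZ,
    frame_ms: int = FRAME_MS,
) -> Iterator[List[int]]:
    """Yield consecutive fixed-size frames from a list of PCM samples.

    The final frame may be shorter if the input does not divide evenly.
    """
    frame_len = sample_rate_hz * frame_ms // 1000  # samples per frame
    for start in range(0, len(samples), frame_len):
        yield samples[start:start + frame_len]


# One second of silence stands in for microphone input.
one_second = [0] * SAMPLE_RATE_HZ
chunks = list(split_into_frames(one_second))
print(len(chunks), len(chunks[0]))  # 50 frames of 320 samples each
```

A live system would do the same thing on a stream rather than a finished list, buffering samples until a full frame is available and handing it off immediately.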

With the audio neatly chunked, the system gets to the core task: feature extraction. It analyzes the specific sound patterns in each chunk—the unique frequencies, tones, and vibrations that form human speech. These patterns are then translated into a numerical format, a language the AI can actually process. It's a bit like a musician identifying individual notes within a complex chord.
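As a rough illustration of turning a chunk of sound into numbers, the sketch below computes a magnitude spectrum with a naive discrete Fourier transform and finds the dominant frequency in a synthetic tone. The sample rate, frame size, and function name are assumptions for the example; production systems typically use faster transforms and richer features, so treat this as a toy version of the idea.

```python
import cmath
import math
from typing import List


def magnitude_spectrum(frame: List[float]) -> List[float]:
    """Naive DFT: magnitude of each frequency bin from 0 Hz up to Nyquist."""
    n = len(frame)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(frame)))
        for k in range(n // 2 + 1)
    ]


SAMPLE_RATE_HZ = 8_000  # assumed sample rate
FRAME_LEN = 160         # 20 ms at 8 kHz, so each bin is 50 Hz wide
TONE_HZ = 400           # synthetic test tone standing in for speech

frame = [math.sin(2 * math.pi * TONE_HZ * t / SAMPLE_RATE_HZ)
         for t in range(FRAME_LEN)]
spectrum = magnitude_spectrum(frame)

# The strongest bin tells us which frequency dominates this frame.
peak_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
peak_hz = peak_bin * SAMPLE_RATE_HZ / FRAME_LEN
print(peak_hz)  # 400.0
```

The list of magnitudes is the "numerical format" in miniature: one vector of numbers per frame that a model can consume.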

This numerical data is then pushed into a powerful AI model, usually a neural network that has been trained on thousands of hours of spoken language. The model acts like a master linguist, rapidly comparing the incoming sound patterns to its massive internal library. It predicts the most probable sequence of phonemes, the smallest units of sound in a language, like the /k/, /æ/, and /t/ sounds in "cat."

These phonemes are then stitched together into words, forming the first draft of the transcript.
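One common way to go from per-frame predictions to a phoneme sequence is greedy decoding in the style of CTC models: pick the most likely symbol for each frame, collapse consecutive repeats, and drop a special "blank" symbol. The sketch below assumes that approach; the probabilities, the blank symbol, and the tiny `LEXICON` are made up for illustration, and real services may decode quite differently.

```python
from typing import Dict, List

BLANK = "_"  # assumed "no phoneme" symbol, as used in CTC-style models


def greedy_decode(frame_probs: List[Dict[str, float]]) -> List[str]:
    """Pick the best symbol per frame, merge repeats, and remove blanks."""
    best = [max(probs, key=probs.get) for probs in frame_probs]
    phonemes: List[str] = []
    previous = None
    for symbol in best:
        if symbol != previous and symbol != BLANK:
            phonemes.append(symbol)
        previous = symbol
    return phonemes


# Hypothetical model output: one probability table per audio frame.
frame_probs = [
    {"k": 0.80, "ae": 0.10, "t": 0.05, "_": 0.05},
    {"k": 0.70, "ae": 0.20, "t": 0.05, "_": 0.05},
    {"k": 0.10, "ae": 0.80, "t": 0.05, "_": 0.05},
    {"k": 0.05, "ae": 0.10, "t": 0.05, "_": 0.80},
    {"k": 0.05, "ae": 0.05, "t": 0.85, "_": 0.05},
]

# Hypothetical pronunciation dictionary mapping phonemes to a word.
LEXICON = {("k", "ae", "t"): "cat"}

phonemes = greedy_decode(frame_probs)
word = LEXICON.get(tuple(phonemes))
print(phonemes, word)  # ['k', 'ae', 't'] cat
```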

This entire workflow, from sound hitting the mic to text appearing on screen, runs in a continuous, lightning-fast loop. As new audio chunks arrive, the model constantly updates and refines its predictions. It might even correct a word it transcribed a second ago as it gains more context from what you say next. That's why you sometimes see words flicker and change in a live transcript.
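The flickering behavior is easier to see in code. Many streaming systems distinguish between interim guesses, which may still change, and final results, which are locked in. The sketch below assumes that model; the `LiveTranscript` class and the `is_final` flag are hypothetical names used only for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LiveTranscript:
    """Keeps locked-in text separate from the current, revisable guess."""
    finalized: List[str] = field(default_factory=list)
    interim: str = ""

    def update(self, text: str, is_final: bool) -> None:
        if is_final:
            # The engine has committed to this text; it will not change again.
            self.finalized.append(text)
            self.interim = ""
        else:
            # A newer guess replaces the previous interim guess entirely.
            self.interim = text

    def render(self) -> str:
        parts = self.finalized + ([self.interim] if self.interim else [])
        return " ".join(parts)


transcript = LiveTranscript()
transcript.update("I scream", is_final=False)
transcript.update("ice cream is", is_final=False)   # earlier guess revised
transcript.update("Ice cream is great.", is_final=True)
transcript.update("let's", is_final=False)
print(transcript.render())  # Ice cream is great. let's
```

Rendering interim text in a lighter style than finalized text is one way a user interface can make these revisions feel less jarring.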

The last step is all about making the raw text clean and readable. The AI applies another layer of intelligence to polish the output on the fly.

This entire cycle repeats itself over and over, delivering a steady stream of text with a delay, or latency, of just a few hundred milliseconds. The end result is a seamless experience where spoken words are turned into a searchable, permanent record almost as fast as they leave your mouth.

We've come a long way from human stenographers painstakingly typing out every word. The jump to automated systems isn't just an improvement; it’s a whole new ballgame, powered by artificial intelligence. Today’s real-time transcription gets its incredible speed and precision from deep learning models and large language models (LLMs) that act like a digital brain.

This AI engine doesn't just match sounds to dictionary words. It adds layers of intelligence that come surprisingly close to human understanding, which makes the final transcript genuinely useful. These models learn from massive datasets of audio and text, training them to pick up on the subtleties of human speech with impressive accuracy.

What really makes modern AI transcription stand out are the advanced features that were once pure science fiction for an automated system. These aren't just bells and whistles; they transform a raw, messy stream of text into a structured, coherent record.

Here are a few of the key AI-driven enhancements:

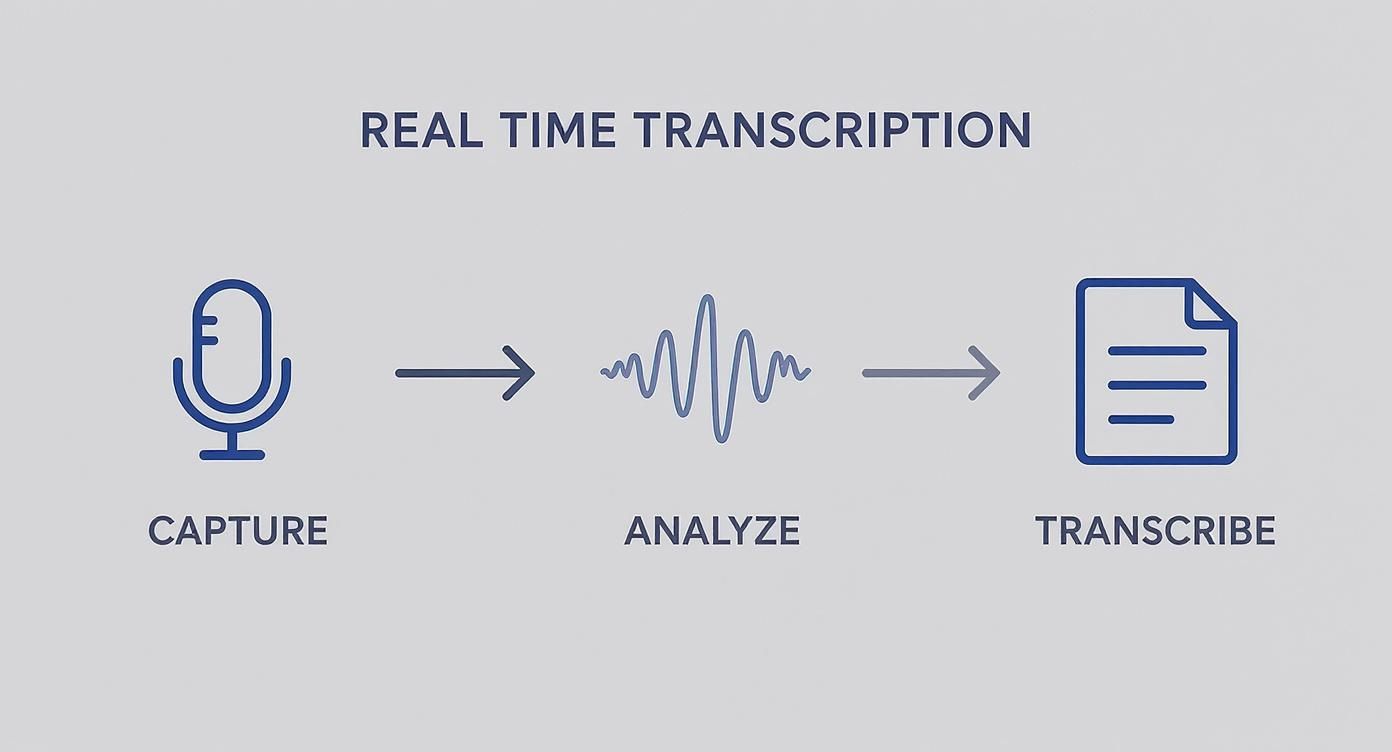

The infographic below shows how this all comes together, from the moment audio is captured to the instant text appears on your screen.

This simple three-step cycle—capture, analyze, and transcribe—runs continuously, delivering text in the blink of an eye.

It's no surprise that the demand for these smart transcription services is through the roof. As more companies embrace remote and hybrid work, the need for a reliable, instant record of conversations has shot up the priority list.

The global market for AI-powered real-time transcription is on a tear, projected to rocket from $4.5 billion in 2024 to $19.2 billion by 2034. That’s a compound annual growth rate (CAGR) of 15.6%.

This growth isn't just a number; it shows how fundamental this technology is becoming for day-to-day collaboration and productivity. North America is leading the charge, accounting for a 35.2% share of the market in 2024. If you want to dig deeper into the numbers, you can explore the full AI transcription market report.
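If you'd like to sanity-check the growth rate quoted above, the standard compound annual growth rate formula is easy to run yourself. This is a quick sketch using the dollar figures from the projection; the `cagr` function name is just an illustrative choice.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# $4.5 billion in 2024 growing to $19.2 billion by 2034.
rate = cagr(4.5, 19.2, 2034 - 2024)
print(f"{rate:.1%}")  # 15.6%
```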

What was once a niche, expensive service is now a scalable and affordable tool for just about any business out there.

https://www.youtube.com/embed/BsojaA1XnpM

The technology behind real-time transcription is fascinating, but its true magic is in solving real-world problems. This isn't just about turning spoken words into text; it's about what that instant text unlocks. Across countless industries, this capability is making a huge difference by boosting accessibility, efficiency, and accuracy right when it matters.

From global live streams to private legal depositions, the applications are incredibly diverse and impactful. Each use case solves a specific pain point and delivers real, measurable value, changing how we all communicate and get work done.

One of the biggest wins for real-time transcription is making live content accessible to everyone. For broadcasts, webinars, and hybrid events, live captions have gone from a "nice-to-have" feature to an absolute must for inclusion.

Picture a huge virtual conference. You've got attendees in noisy coffee shops, others who are hard of hearing, and some who just process text better than audio. A live transcript ensures no one misses a single crucial point. This keeps everyone locked in and helps bridge the gap between people in the room and those joining remotely, creating one unified experience.

"When every word is captured and displayed as text, no one misses a thing—whether they’re battling background noise, dealing with poor audio, or struggling with an accent. It’s not just about accessibility; it’s about making sure every attendee, no matter where they are, stays engaged and informed."

Having that immediate text record also means people can quickly search for a specific term or review a point that was just made, all without interrupting the speaker.

Beyond public events, real-time transcription is a game-changer in professional settings where speed and precision are non-negotiable.

In these environments, transcription isn't just for notes later; it’s an active tool that helps people do their jobs better in the moment.

The medical field has jumped on this technology to solve one of its biggest headaches: administrative overload. Doctors and nurses spend hours on clinical documentation, which contributes to burnout and pulls them away from patient care. Real-time transcription helps automate a huge chunk of that work.

As a doctor talks with a patient, the conversation is transcribed instantly, filling out electronic health records (EHR) with accurate notes. This is a lifesaver in telehealth, where clear documentation is vital for providing continuous care. The U.S. medical transcription market is projected to hit $3.3 billion in 2025 and is expected to soar past $5.1 billion by 2034, mostly because of the urgent need to cut down on these administrative tasks. You can find more data on the medical transcription market's growth on dittotranscripts.com.

The table below breaks down how different industries are putting this technology to work and the specific advantages they're seeing.

Real Time Transcription Applications Across Industries

| Industry | Primary Use Case | Key Benefit |
| :--- | :--- | :--- |
| Education | Live classroom lectures & virtual learning | Improved accessibility for students with disabilities and better comprehension for all. |
| Media & Entertainment | Live closed captions for broadcasts & events | Ensures compliance with accessibility laws and broadens audience reach. |
| Call Centers | Real-time agent assistance & compliance monitoring | Boosts agent performance with live prompts and guarantees adherence to scripts. |
| Legal | Depositions, court reporting & hearings | Creates an immediate, searchable record for attorneys to reference during proceedings. |
| Finance | Earnings calls & investor briefings | Provides instant, accurate transcripts for analysts, investors, and regulatory compliance. |

As you can see, the applications are incredibly broad. The common thread is that real-time transcription provides immediate textual data that empowers people and streamlines operations in ways that simply weren't possible before.

Picking a transcription service isn't always straightforward. With so many options on the market, it's easy to get lost in the marketing noise. The trick is to look past the buzzwords and zero in on a few core metrics that actually matter.

Think of it this way: are you looking for a simple, ready-made tool, or do you need the raw power to build something new? A standalone app might work for transcribing a meeting here and there, but a real-time transcription API gives developers the building blocks to integrate that same power directly into their own products. It's about having total control over the experience you create for your users.

When you start comparing services, you'll find that four things are truly non-negotiable. These benchmarks will tell you almost everything you need to know about how a service performs in the real world.

Accuracy (Word Error Rate): This is the big one. Word Error Rate (WER) is the industry standard for measuring accuracy: the number of word-level mistakes (substitutions, deletions, and insertions) divided by the number of words actually spoken. A lower WER is always better, and the best services can achieve a WER below 5% in good conditions.

Latency: How long does it take for spoken words to appear as text? That delay is latency. For things like live captions or real-time agent assistance, you need this to be under one second. Anything more, and the experience starts to feel clunky and out of sync.

Scalability: Can the system keep up when things get busy? A solid platform needs to handle everything from a single user to thousands of simultaneous audio streams without breaking a sweat or letting performance slip.

Language Support: This one’s simple but crucial. Does the service support the languages and dialects your users actually speak? A platform built for a global audience needs to have a deep library of languages to be truly effective.
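Of these four, Word Error Rate is the one you can most easily measure yourself. A minimal sketch follows, assuming simple lowercase, whitespace-based word splitting (real evaluations usually normalize punctuation and numbers more carefully). It counts the fewest substitutions, deletions, and insertions needed to turn the reference into the system's output, then divides by the reference length. The function name and sample sentences are illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # previous[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1       # reference word missing from output
            insertion = current[j - 1] + 1   # extra word in output
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


reference = "the quick brown fox jumps over the lazy dog"
hypothesis = "the quick brown box jumps over lazy dog"  # 1 substitution, 1 deletion
wer = word_error_rate(reference, hypothesis)
print(f"{wer:.1%}")  # 22.2%
```

Running a check like this on a handful of your own recordings gives a far more useful number than a vendor's headline figure.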

The sweet spot is finding a solution that offers a low WER and minimal latency without forcing you to compromise on either. For developers, this means an API that's not just accurate, but fast and reliable enough to build a flawless user experience on top of it.

Ultimately, the "best" choice really depends on what you're trying to accomplish. If you just need a tool for personal use, a pre-built app will probably do the job just fine.

But if you're a developer looking to add powerful transcription features to your own application, or a business trying to weave transcription into your existing workflows, a developer-first API like Lemonfox.ai is the way to go. An API gives you direct access to the transcription engine, letting you create completely custom solutions without having to build the complex AI models yourself. It’s all about getting maximum flexibility to innovate and scale on your own terms.

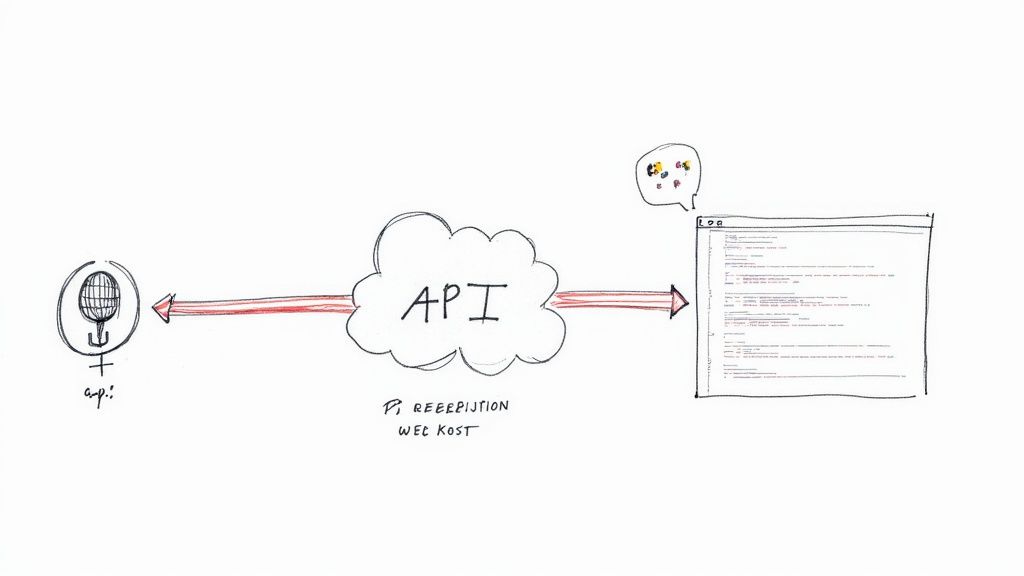

If you're a developer looking to build real-time transcription directly into your own products, an API is your best friend. It’s the most direct and powerful way to get the job done without getting bogged down in building and maintaining your own complex AI infrastructure. Think of it as plugging into a ready-made, high-performance speech-to-text engine with just a few lines of code.

Instead of a one-size-fits-all app, a developer API gives you the fundamental building blocks to create something truly custom. The whole process hinges on creating a stable, two-way connection to the transcription service. This is almost always handled with a WebSocket, which is perfect for continuously streaming live audio and getting text back almost instantly.

Once you've established that secure connection, your application begins feeding it a stream of audio data. The API on the other end grabs these tiny audio chunks, processes them on the fly, and sends back structured data—usually in a clean JSON format. This isn't just a wall of text; it typically includes the transcript, precise timestamps, and even who said what (speaker labels).
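To give a feel for working with that structured response, here is a small sketch that parses one message and turns it into a caption line. The JSON shape shown (fields such as `is_final`, `words`, `start`, `end`, and `speaker`) is a hypothetical schema invented for this example, not the format of any specific API, so check your provider's documentation for the real field names.

```python
import json

# Hypothetical message, shaped like what a streaming API might send back.
message = """
{
  "is_final": true,
  "text": "Hello there.",
  "words": [
    {"word": "Hello",  "start": 0.12, "end": 0.48, "speaker": "A"},
    {"word": "there.", "start": 0.50, "end": 0.91, "speaker": "A"}
  ]
}
"""


def format_caption(raw: str) -> str:
    """Build a one-line caption with time range and speaker label."""
    payload = json.loads(raw)
    words = payload["words"]
    start = words[0]["start"]   # when the first word began
    end = words[-1]["end"]      # when the last word finished
    speaker = words[0]["speaker"]
    return f"[{start:.2f}-{end:.2f}] Speaker {speaker}: {payload['text']}"


caption = format_caption(message)
print(caption)  # [0.12-0.91] Speaker A: Hello there.
```

Word-level timestamps like these are what make features such as click-to-seek or highlighted captions possible.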

From a bird's-eye view, the steps are pretty straightforward:

Good API documentation, like what you’ll find for the Lemonfox.ai API, will walk you through this process with clear, copy-paste-ready examples.

This screenshot gives you a peek at what that structured data looks like. You get the final transcript along with word-level timestamps, which is exactly what you need to build cool, interactive features on top of the text.

Ultimately, this approach gives you complete creative control. You decide exactly how the real-time transcription looks and feels, allowing you to craft a perfectly seamless and branded experience for your users.

If you're thinking about using real-time transcription, you probably have a few questions. It’s a big step, so getting clear, honest answers is the best way to figure out if this technology is the right fit for your work. Let’s tackle some of the most common ones we hear.

Accuracy is usually the first question on everyone's mind. The best AI transcription services can hit over 95% accuracy in perfect conditions (think crystal-clear audio with no background noise). In the industry, we measure this with something called Word Error Rate (WER), where a lower number is better.

But here's the reality: performance in the real world depends on a lot. Things like background chatter, heavy accents, or a poor microphone connection can all affect the results. That's why we always recommend testing any service with your own audio first. It's the only way to get a true feel for how it will perform for your specific needs before you go all in.

Data security is a valid concern, especially with sensitive conversations. Any provider worth their salt puts security front and center. They should be encrypting your data both in transit and at rest, which means it's scrambled and protected both while it's being sent and when it's stored.

If you're in a field like healthcare or finance, dig a little deeper. Look for compliance with regulations like GDPR or HIPAA. Documented compliance is a good sign that the company takes data privacy seriously and follows strict security rules. It's about peace of mind.

The main difference between real-time and batch transcription boils down to one thing: speed.

Real-time transcription is all about a "now" result. It turns speech into text almost instantly, as the words are being spoken. This is exactly what you need for live events, closed captioning, or giving a customer service agent live feedback during a call.

Batch transcription, on the other hand, works with pre-recorded files. You upload an audio or video file, and the system processes it, delivering the full transcript a bit later. It’s the perfect choice when you don't need the text immediately—think transcribing meeting recordings or interviews for documentation.

Ready to build with a fast, accurate, and affordable transcription API? Lemonfox.ai offers a developer-first platform with transparent pricing and robust features. Start your free trial and get 30 hours of transcription today.